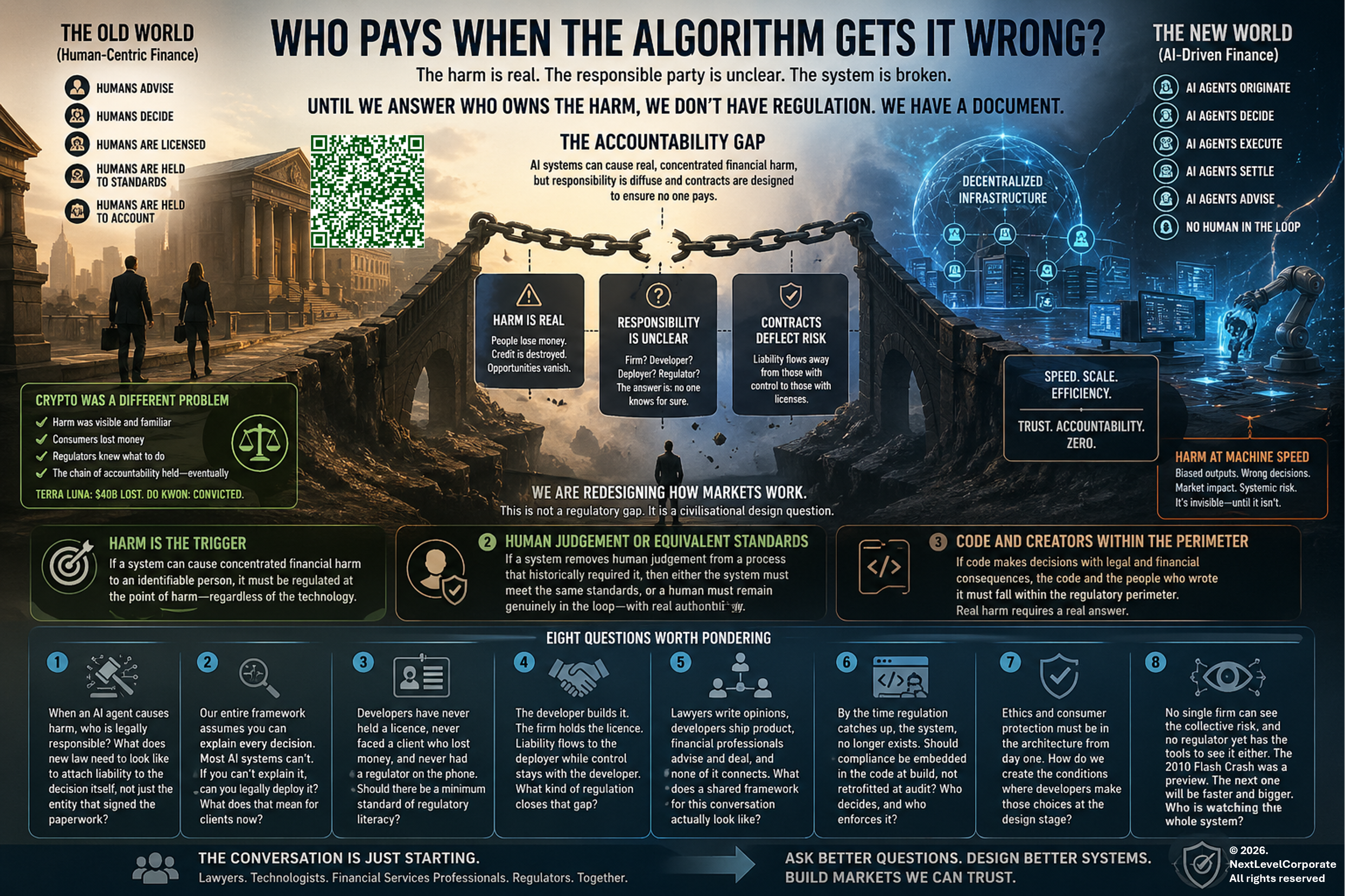

Who pays when the algorithm gets it wrong?

“The Agentic Civilisational Divide”. (c) 2026. Prompt by NextLevelCorporate. All rights reserved. Image generated by AI.

TL;DR

With Mythos-class models now operating autonomously inside the same infrastructure that underpins financial markets, one question has stopped being theoretical.

Who owns the harm?

It's a question sitting at the centre of every conversation I've been having lately about AI, fintech and financial services, and nobody has a clean answer to it yet.

That sounds like a narrow legal question. It isn't. It's the foundational question, and behind it sit questions that are really about civilisational design.

Every regulatory system ever built starts from the same place. Someone is harmed, someone is responsible, someone pays. That chain is what gives regulation its teeth. Remove any link and the whole thing collapses into guidelines and good intentions.

But with AI in financial services, that chain is broken before you even start. The harm is real. The responsible party is genuinely unclear, although the EU AI Act's regulatory focus on developers and providers when dealing with high-risk AI systems provides a clue. Still, the contractual architecture surrounding these systems has been deliberately designed to ensure that whoever might pay, doesn't.

So you can write all the regulation you like. But if you haven't first answered the question of who owns the harm, you haven't built a regulatory system. You've built a document.

Today let's discuss how we make sure that when we redesign the infrastructure of financial markets around AI and agentic systems, we don't accidentally engineer harm into the foundations.

Crypto was a different problem

With crypto the harm was visible and familiar. People lost money. Exchanges collapsed. Fraud occurred. Regulators knew what to do because the harm looked like harms they had seen before, just in a new wrapper. Consumer protection was the right lens because consumers were being hurt in recognisable ways. The Terra Luna collapse wiped out forty billion dollars. Do Kwon eventually went to prison. The chain held, eventually, even if it took years.

AI is different because for most of its existence the harm has been invisible or diffuse. A model producing biased output. A system making subtly wrong recommendations at scale. A chatbot giving bad advice to someone who didn't know to question it. Nobody loses their house in a single moment. The harm accumulates quietly across millions of interactions, and nobody connects the dots.

The regulators weren't slow because they were asleep. They were uncertain because AI arrived wrapped in a browser, free of charge, available to everyone, and the harm wasn't obvious. When something is free and frictionless the consumer protection instinct doesn't fire in the same way. Where is the harm in a chatbot? Where is the victim?

The threshold changes everything

The moment AI crosses into identity and money, something fundamental shifts. That is the threshold where diffuse harm becomes concentrated harm. Where invisible becomes visible. Where "the model got it wrong" becomes "I lost my savings" or "my credit was destroyed" or "my business didn't get the loan it needed."

Mythos makes this concrete. If Anthropic's most capable model can autonomously identify and exploit vulnerabilities inside the same systems that hold deposits, clear trades and price risk without a single human in the loop, the crossing of that economic blood-brain barrier is no longer a metaphor. It’s a technical capability that exists today, in limited circulation, and the financial infrastructure on the other side of it was not designed with that adversary in mind. You simply can’t see a digital peg in a physical hole.

Essentially, we’re talking about anything that crosses the economic blood-brain barrier.

And when you combine AI with blockchain and genuinely agentic systems, the question stops being about harm to individuals and starts being about whether humans are in the system at all.

That is not a hypothetical. You can theoretically build a financial market infrastructure today that originates, prices, executes, settles and advises on transactions without a single human making a single decision at any point in the chain. And the efficiency case for this is compelling, as I’ve pointed out in my Autonomics white paper.

Still, the trust case for it is essentially zero, at least right now. That’s because we have no framework for knowing whether we can rely on it, no way to audit it in real time, and no clear answer on who is accountable when it fails.

Mythos, as an example, is not a hypothetical stress test. We are told it has found critical vulnerabilities in every major operating system and web browser. These are flaws that had survived decades of human review and millions of automated security scans. That capability exists now, held by a small number of organisations with imperfect access controls and no liability framework that reaches the people who built it. That is what a trust deficit with real consequences looks like.

We are redesigning how markets work

What we are really grappling with is not a regulatory gap. It’s a civilisational design question. And it doesn’t matter whether it’s a fully or partially autonomic economy. It’s about the interconnected roles and the societal and economic contributions of human, machine and stateless code, and about who is on the hook when the boundaries between them collapse.

The institutions, laws, professions and market structures that govern finance were all built around human agency. Humans advise. Humans decide. Humans are licensed, held to standards, and held to account. The systems we are now building exhibit apparent agency without possessing it. They act like decision makers without being decision makers in any legal or moral sense. And we are asking frameworks built entirely around human accountability to govern entities that are neither human nor accountable in any meaningful existing sense.

I think the answer to what needs to be regulated has at least three parts, and maybe more.

First, if a system can cause concentrated financial harm to an identifiable person, it needs to be regulated at the point of that harm regardless of what technology produces it. The harm is the trigger, not the tech.

Second, if a system removes human judgement from a process that has historically required licensed human judgement, then either the system itself needs to meet the standards that human was required to meet, or a human needs to remain genuinely in the loop. Not as a rubber stamp. As a real check with real authority and real accountability.

Third, and this is the hardest one, if the code itself is making decisions that carry legal and financial consequences then the code and the people who wrote it need to fall within the regulatory perimeter. Not because the code is a person. But because the harm it causes is real, and real harm requires a real answer.

What this means in practice

In financial services in most developed economies, we’ve spent decades building a trust infrastructure. Licensing, disclosure, dispute resolution, compensation schemes, conduct obligations. It is imperfect and often stumbles when it straddles borders, but generally it works because it rests on the assumption that somewhere in the chain there is a human being who can be identified, questioned and held responsible.

Agentic AI and blockchain-based market infrastructure challenge that assumption at its root. If we let that infrastructure be hollowed out without replacing it with something equally robust, we will not just have a regulation problem. We will have a market that only some participants understand, with none of them on the hook if it goes wrong.

We are at the beginning of this, not the end. The EU is ahead with the EU AI Act, which seeks to regulate high-risk AI activities in the EU regardless of where they originate. The Act imposes the majority of obligations on providers (developers) as distinct from deployers (users). However, it is not specific to financial markets.

Elsewhere, the conversations are just starting. The regulation is years behind. The developers are shipping product today that will be in regulated environments tomorrow. And the lawyers, the technologists and the financial services professionals who all need to solve this together are largely still working in separate rooms.

These are the questions I think we all need to sit with. I don't have clean answers to any of them. But I think asking them out loud, together, is where it starts.

Industry involvement, education, financial services advocacy, and systems built so that all stakeholders can access the same room or sandbox are mission critical for anything that crosses the identity/money threshold.

Eight questions worth pondering

When an AI agent causes harm in financial services, nobody knows who is legally responsible. Not the firm, not the developer and not the regulator. What does new law need to look like to attach liability to the decision itself, not just the entity that signed the paperwork?

Our entire regulatory framework assumes you can explain every decision. Most AI systems can't. If you can't explain it, can you legally deploy it? And if AI is already embedded in decisions across financial services without meeting that standard, what does that mean for the clients on the receiving end?

The developers building these systems have never held a licence, never sat across from a client who lost money, and never had a regulator on the phone. Should there be a minimum standard of regulatory literacy before their products enter licensed financial environments?

The firm that builds the AI and the firm that holds the licence are two different entities. The liability flows to the deployer while the control stays with the developer. Contracts don't close that gap. What kind of regulation does? Is the EU AI Act the right model?

Lawyers are writing opinions, developers are shipping product, and financial services professionals are advising and dealing, and none of it connects. The person paying for that disconnection is the client. What does a shared framework for this conversation actually look like?

By the time regulation catches up, the system it was written for no longer exists. Should compliance be embedded in the code at build, not retrofitted at audit? And if so, who decides what that looks like and who enforces it?

Ethics and consumer protection can't be patched into a system after it's built. They have to be in the architecture from day one. How do we create the conditions where developers make those choices at the design stage rather than the compliance stage?

No single firm can see the risk they're collectively creating and no regulator yet has the tools to see it either. The 2010 Flash Crash was a preview of what correlated algorithmic behaviour looks like at scale. The next one may not need a fat finger or a feedback loop. It may need only a model operating at compute speed, autonomously chaining small weaknesses across interconnected protocols, in a market structure that was never designed to detect it, let alone stop it. Mythos has already shown that capability exists, so the question is not whether a system like this could destabilise a market. It’s whether we will have built anything capable of catching it before it does.

“The Civilisational design divide”. (c) 2026. NextLevelCorporate. Prompted by NextLevelCorporate, generated by AI.

End notes

This article builds on several other pieces I’ve written at the intersection of AI, blockchain, robotics, taxes, autonomics and the next system of money and credit.

In particular, one of the more contentious civilisational design issues allied to the above is the question of who will pay taxes to keep governments functioning when agentic systems displace enough income-tax-paying individuals and corporate-tax-paying firms. You can get a quick refresher here.

If you’re grappling with (or solving) these pain points, let’s start a conversation. DM me any time, especially if you’re a fintech developing agentic rails and/or application layer solutions that cross the identity-money barrier, whether a product, service or something that’s yet to be defined.

Good luck and see you in the market 🖐

Mike

With decades of success across six continents, NextLevelCorporate helps you navigate the intersection of M&A, financial advisory, and business strategy, delivering macro-aligned corporate development strategies and the transactions that bring them to life.

All content is copyright NextLevelCorporate. NextLevelCorporate and logo are registered trademarks.